HiSilicon's 2025 AI SoCs and Trusted Execution

HiSilicon's 2025 AI SoCs integrate a hardware-isolated Trusted Execution Environment (TEE). This system delivers end-to-end security for AI models and data. Its key innovation is a tightly coupled hardware and software architecture, creating a trusted foundation for low-latency AI execution. This specialized design contrasts with general-purpose TEEs, which often introduce significant performance overhead for AI workloads.

| TEE Type | Scenario | Throughput Overhead | Latency Overhead |
|---|---|---|---|
| SGX | Single-socket (Llama2) | 4.80-6.15% | < 20% |
| TDX | Single-socket (Llama2) | 5.51-10.68% | < 20% |
| TDX | Multi-socket | 12.11-23.81% | N/A |
| SGX | Multi-socket (large models) | N/A | Up to 230% |

Key Takeaways

- HiSilicon's new AI chips have a special secure area. This area protects AI models and data very well.

- The chips use a 'Security by Design' plan. This means security is built in from the start, not added later.

- The system protects AI models when they load and run. It uses strong encryption and checks to keep them safe.

- These chips have special hardware for security tasks. This makes security fast and does not slow down AI work.

- The chips protect against many attacks. These include physical attacks and software hacks.

SECURITY BY DESIGN: A HOLISTIC APPROACH

HiSilicon embeds security into every stage of SoC development. This "Security by Design" philosophy moves beyond just performance. It prioritizes a safer and more secure foundation for AI devices, from silicon IP blocks to firmware. This holistic approach is critical for building confidence in modern AI and IoT systems. The architecture's security rests on three core pillars.

THE SECURE BOOT AND CHAIN OF TRUST

The system establishes trust from the moment it powers on. A secure boot process creates an unbroken "chain of trust." This process begins with a hardware-based root-of-trust containing immutable cryptographic keys. This root verifies the digital signature and hash of the next software component before allowing it to run. Each validated component then verifies the next one in the sequence. Any failure at any stage halts the boot process immediately. This step-by-step verification ensures that the device only executes authentic, trusted code.
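The step-by-step verification described above can be sketched in a few lines of Python. This is a simplified illustration, not HiSilicon's implementation: it checks SHA-256 digests only, whereas a real secure boot flow also verifies digital signatures against keys held in the hardware root of trust. The names `Stage`, `build_chain`, `boot`, and `BootHalted` are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    image: bytes
    next_digest: Optional[str]  # digest of the stage this one vouches for


class BootHalted(Exception):
    """Raised when a stage fails verification; boot stops immediately."""


def stage_digest(stage: Stage) -> str:
    # The digest covers the image AND the digest it carries for the next
    # stage, so neither can be swapped without detection.
    payload = stage.image + (stage.next_digest or "").encode()
    return hashlib.sha256(payload).hexdigest()


def build_chain(images: List[Tuple[str, bytes]]) -> Tuple[Optional[str], List[Stage]]:
    """Build stages back to front; return (root digest, ordered stages)."""
    stages: List[Stage] = []
    next_digest: Optional[str] = None
    for name, image in reversed(images):
        stage = Stage(name, image, next_digest)
        stages.append(stage)
        next_digest = stage_digest(stage)
    stages.reverse()
    return next_digest, stages


def boot(root_digest: Optional[str], stages: List[Stage]) -> List[str]:
    """Verify each stage before 'running' it; halt on the first mismatch."""
    expected = root_digest  # stands in for the immutable hardware root of trust
    booted: List[str] = []
    for stage in stages:
        if expected is None or not hmac.compare_digest(stage_digest(stage), expected):
            raise BootHalted(f"verification failed at {stage.name}")
        booted.append(stage.name)
        expected = stage.next_digest
    return booted
```

Calling `boot(*build_chain([...]))` on untouched images returns the stage names in order. Replacing any image after the chain is built raises `BootHalted` at that stage, so nothing downstream of it runs.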

HARDWARE-LEVEL ISOLATION FOR AI

The SoC provides robust hardware-level isolation for AI workloads. It uses internal Memory Management Units (MMUs) to create protected memory zones for the AI accelerator. This hardware barrier prevents other system processes from accessing or corrupting the AI model and its sensitive data during operation. The system also configures Direct Memory Access (DMA) to enforce these boundaries, ensuring that data flows only between authorized components. This strict separation is essential for a secure trusted execution environment.
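As a rough mental model, the protected zones behave like a default-deny table that maps address ranges to the bus masters allowed to touch them. The sketch below is an assumption-laden illustration of that idea: the region layout, the master names (`cpu`, `gpu`, `npu`, `secure_dma`), and the `check_access` function are all hypothetical and do not describe the actual SoC registers.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Region:
    base: int
    size: int
    allowed: FrozenSet[str]  # bus masters permitted to access this region

    def contains(self, addr: int, length: int) -> bool:
        # The whole access must fall inside the region.
        return self.base <= addr and addr + length <= self.base + self.size


def check_access(regions: Iterable[Region], master: str, addr: int, length: int) -> bool:
    """Default deny: allow only if one region fully covers the access
    and explicitly lists the requesting master."""
    if length <= 0:
        return False
    return any(r.contains(addr, length) and master in r.allowed for r in regions)


# Hypothetical memory map: a normal-world region and a protected AI region.
REGIONS = [
    Region(0x8000_0000, 0x1000_0000, frozenset({"cpu", "gpu"})),
    Region(0x9000_0000, 0x0400_0000, frozenset({"npu", "secure_dma"})),
]
```

With this map, `check_access(REGIONS, "cpu", 0x9000_0000, 64)` is rejected while the same access from `secure_dma` is allowed. An access that straddles the boundary between the two regions is rejected for every master.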

DEDICATED CRYPTOGRAPHIC ACCELERATORS

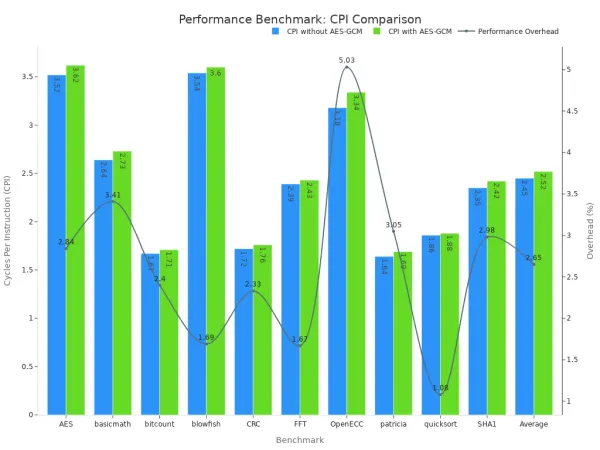

Strong security requires efficient cryptography. HiSilicon's SoCs integrate dedicated cryptographic accelerators to handle intensive calculations. These hardware blocks perform operations like hashing and encryption far more efficiently than a general-purpose CPU. This approach minimizes performance penalties, which is crucial for low-latency AI and IoT applications. The data shows that this hardware-assisted security has a minimal impact on overall performance.

As shown, the average performance overhead from cryptographic operations is only 2.65%, confirming the accelerator's efficiency.

FOUNDATIONS OF TRUSTED EXECUTION

The secure boot process builds a trusted foundation. The system then uses this foundation to protect AI models and data during operation. This trusted execution environment relies on several key pillars to maintain security from loading to inference.

SECURE MODEL LOADING AND EXECUTION

An AI model's intellectual property is most vulnerable when being loaded into memory. HiSilicon's TEE uses a multi-stage process to load and run models without exposing them. This secure execution workflow ensures the model's integrity and confidentiality.

- Attestation and Key Exchange: The secure enclave first proves its identity to a remote Key Management Service (KMS). After verifying the enclave is genuine, the KMS sends it the necessary decryption keys.

- Model Provisioning: The encrypted AI model is streamed to the device. The model remains encrypted until it is inside the protected memory of the TEE.

- Secure Decryption: The AI model is only decrypted within the TEE's protected memory. This step ensures the model's weights and architecture are never exposed in plaintext outside the secure zone.

- Data Ingestion: The enclave also proves its identity to the client device. The client then encrypts its input data before sending it. The enclave decrypts the input, performs the inference, and re-encrypts the output.
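The attestation and key-exchange step can be illustrated with a minimal sketch. It assumes a shared device key and an HMAC-based quote purely to stay within Python's standard library; production attestation normally uses asymmetric signatures and certificate chains, and the released key would be wrapped for the enclave rather than returned in the clear. The `Enclave` and `KMS` classes and their methods are hypothetical names.

```python
import hashlib
import hmac
import secrets
from typing import Optional, Tuple


def measure(code: bytes) -> bytes:
    """Measurement of the enclave code (here simply its SHA-256 digest)."""
    return hashlib.sha256(code).digest()


class Enclave:
    def __init__(self, code: bytes, device_key: bytes) -> None:
        self._measurement = measure(code)
        self._device_key = device_key

    def quote(self, nonce: bytes) -> Tuple[bytes, bytes]:
        # Bind the measurement to the verifier's fresh nonce.
        mac = hmac.new(self._device_key, self._measurement + nonce, hashlib.sha256).digest()
        return self._measurement, mac


class KMS:
    def __init__(self, device_key: bytes, trusted_measurement: bytes, model_key: bytes) -> None:
        self._device_key = device_key
        self._trusted = trusted_measurement
        self._model_key = model_key
        self._nonce: Optional[bytes] = None

    def challenge(self) -> bytes:
        self._nonce = secrets.token_bytes(16)
        return self._nonce

    def release_key(self, measurement: bytes, mac: bytes) -> Optional[bytes]:
        nonce, self._nonce = self._nonce, None  # each nonce is single use
        if nonce is None:
            return None
        expected = hmac.new(self._device_key, measurement + nonce, hashlib.sha256).digest()
        genuine = hmac.compare_digest(mac, expected)
        trusted = hmac.compare_digest(measurement, self._trusted)
        return self._model_key if genuine and trusted else None
```

An enclave running the expected code receives the model key. An enclave whose code has been modified produces a different measurement and receives nothing, and a replayed quote fails because its nonce has already been consumed.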

Verifying Integrity Before Execution: The system uses multiple attestation methods to confirm its integrity. These checks include control-flow attestation to verify software execution paths and device-specific watermarks embedded within the AI model. Remote attestation confirms that security features are active and the environment has not been tampered with before any sensitive information is processed.

REAL-TIME MEMORY ENCRYPTION

Once loaded, the model and its associated data remain protected. The SoC performs real-time memory encryption for all operations within the TEE. It uses dedicated hardware engines to encrypt and decrypt data as it moves between the AI accelerator and system RAM. This process is similar to the memory protection features found in advanced confidential computing technologies like AMD SEV-SNP and Intel TDX.

This hardware-first approach is critical for performance. Software-based encryption creates significant bottlenecks, slowing down AI tasks. HiSilicon's design avoids this by using dedicated crypto cores. These accelerators handle encryption with minimal CPU load and latency, a vital feature for real-time AI applications in IoT and edge devices. This efficiency ensures that strong security does not compromise the speed of data processing.
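To make the idea of inline memory encryption concrete, the toy model below encrypts data with a keystream derived from a secret key and the physical address, so identical plaintext stored at different addresses produces different ciphertext. It is only a sketch: a SHA-256 keystream stands in for the hardware cipher, which in real engines is typically a tweakable AES mode, and this toy reuses its keystream when the same address is rewritten. The `xcrypt` function and `BLOCK` constant are hypothetical.

```python
import hashlib

BLOCK = 32  # bytes of keystream per SHA-256 call


def xcrypt(key: bytes, addr: int, data: bytes) -> bytes:
    """XOR data with an address-dependent keystream.

    The same call encrypts and decrypts. Toy stand-in for a hardware
    memory-encryption engine; not suitable for real use.
    """
    out = bytearray(len(data))
    for off in range(0, len(data), BLOCK):
        # Tweak the keystream with the address of each block.
        tweak = (addr + off).to_bytes(8, "little")
        keystream = hashlib.sha256(key + tweak).digest()
        for j, byte in enumerate(data[off:off + BLOCK]):
            out[off + j] = byte ^ keystream[j]
    return bytes(out)
```

Writing the same weights to two different addresses yields unrelated ciphertext in RAM, and reading them back through `xcrypt` with the same key and address restores the plaintext.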

PREVENTING A DATA BREACH

The ultimate goal of the TEE is to prevent a data breach. It achieves this by placing a hardware-enforced barrier around AI workloads. Even if an attacker gains control of the main operating system, they cannot access the code or intermediate data inside the TEE. This robust security model protects user privacy and the model's intellectual property.

The TEE's design prevents a data breach by enforcing four key principles:

- Isolation: Code and data are completely separated from the rest of the system.

- Confidentiality: Data is encrypted whenever it leaves the TEE and is accessible in plaintext only inside it.

- Integrity: The system ensures that code and data are not modified by any outside threat.

- Attestation: It provides cryptographic proof that the TEE is genuine and running trusted code.

Together, these features create a secure vault for AI operations. They protect the entire lifecycle of sensitive information, from encrypted inputs to the final encrypted results. This comprehensive protection is essential for building trust in next-generation AI and IoT systems.

COMPARING TRUSTED EXECUTION ENVIRONMENTS

HiSilicon's architecture provides a specialized trusted execution environment. This design differs significantly from general-purpose solutions. Understanding these differences highlights the system's advantages for modern AI workloads. The comparison focuses on performance, security, and developer integration.

PERFORMANCE ADVANTAGES IN AI

General-purpose trusted execution environments (TEEs) often struggle with the demands of AI. Systems like Arm TrustZone create a secure world separate from the normal operating system. This separation is effective but can introduce performance penalties. Each time data or control passes between the normal and secure worlds, the system performs a "context switch." These switches add latency, which is a major problem for real-time AI.

HiSilicon's design minimizes this overhead. It uses a co-design approach where the AI hardware and the TEE are tightly integrated. This architecture reduces the need for frequent context switches.

- Reduced Latency: The system processes AI tasks directly within the secure hardware. This avoids the performance cost of moving data between separate security domains.

- High Throughput: Dedicated cryptographic accelerators handle encryption and decryption. This offloads work from the main CPU, allowing it to focus on other tasks.

- Optimized Data Paths: The SoC configures DMA channels for secure, direct data flow between memory and the AI accelerator. This eliminates bottlenecks found in less integrated systems.

This specialized approach gives HiSilicon's trusted execution a clear performance edge over general-purpose TEEs for AI inference. The design prioritizes low-latency processing from the ground up.
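The cost of context switches can be reasoned about with a simple additive model: total latency is compute time plus the number of world switches multiplied by the cost of each switch. The sketch below uses purely illustrative numbers, not measurements of any HiSilicon or Arm device, and the function names are hypothetical.

```python
def inference_latency_us(compute_us: float, world_switches: int, switch_cost_us: float) -> float:
    """Total latency = compute time + (number of switches x cost per switch)."""
    return compute_us + world_switches * switch_cost_us


def overhead_pct(baseline_us: float, secured_us: float) -> float:
    """Relative slowdown of the secured run versus the unsecured baseline."""
    if baseline_us <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (secured_us - baseline_us) / baseline_us


# Illustrative assumptions: 2000 us of compute, 5 us per world switch.
BASELINE_US = 2000.0
per_layer = inference_latency_us(BASELINE_US, world_switches=200, switch_cost_us=5.0)
per_inference = inference_latency_us(BASELINE_US, world_switches=2, switch_cost_us=5.0)

print(overhead_pct(BASELINE_US, per_layer))      # 50.0
print(overhead_pct(BASELINE_US, per_inference))  # 0.5
```

Under these assumed numbers, switching worlds 200 times per inference adds 50% latency, while keeping the whole inference inside the secure domain and switching only on entry and exit adds 0.5%.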

ENHANCED SECURITY FEATURES

Many existing TEEs have faced security challenges over the years. Researchers have discovered vulnerabilities related to side-channel attacks, memory access patterns, and software bugs. HiSilicon's design learns from these past issues. It incorporates specific countermeasures to address known vulnerabilities and strengthen its security posture.

For example, some attacks on the TrustZone architecture have exploited shared resources like system caches. HiSilicon's hardware-level isolation creates stricter boundaries around the AI accelerator's memory and processing units. This separation makes it much harder for an attacker in the normal world to observe or influence operations inside the secure enclave. The design directly addresses existing vulnerabilities in TEEs by implementing stronger hardware partitions.

Proactive Defense: The architecture does not just create a wall; it actively defends against specific attack vectors. It includes hardware features to randomize memory access timing and obfuscate power consumption patterns. These features are crucial for protecting against sophisticated physical attacks. This proactive security model is a key differentiator.

INTEGRATION WITH AI DEVELOPMENT

A powerful security system is only effective if developers can use it easily. Integrating AI models with general-purpose TEEs can be complex. Developers often need deep expertise in low-level system programming and cryptography. This complexity can slow down development and increase the risk of implementation errors.

HiSilicon simplifies this process. It provides a comprehensive software development kit (SDK) with high-level APIs.

- Simplified APIs: Developers can secure their models with just a few lines of code. The API handles the complex details of attestation, key exchange, and secure loading.

- Toolchain Support: The development tools integrate seamlessly with popular AI frameworks. This allows developers to build, quantize, and deploy models without leaving their familiar environment.

- Pre-built Secure Components: The SDK includes pre-verified components for common tasks. This reduces the developer's burden and ensures that critical security functions are implemented correctly.

This focus on usability accelerates the development cycle. It empowers AI engineers to build secure applications without becoming security experts. This streamlined workflow makes the advanced security features accessible and practical for a wide range of AI products.

ADDRESSING EMERGING THREATS

A strong security posture requires defenses against a wide range of attacks. HiSilicon’s SoCs incorporate advanced features to address critical threats, from physical tampering to sophisticated software exploits. This proactive approach to cybersecurity is essential for protecting modern IoT devices.

COUNTERMEASURES FOR PHYSICAL ATTACKS

Physical attacks attempt to access a chip’s internal components directly. These attacks are a serious threat to device integrity. Common methods include:

- Reverse engineering to understand the chip's design.

- Microprobing to access internal bus lines with a fine needle.

- Invasive fault injection to disrupt normal operations and extract secrets.

HiSilicon’s design includes robust countermeasures to defeat these attacks. The SoC is protected by an active mesh layer and epoxy encapsulation. The mesh creates a sensitive grid over the chip. Any attempt to breach it triggers an immediate security response, like wiping sensitive memory. This hardware security makes physical tampering extremely difficult.

PROTECTING AGAINST SIDE-CHANNEL ATTACKS

Side-channel attacks do not break encryption directly. Instead, they analyze physical information leaking from the chip and recover secrets through careful observation. Simple Power Analysis (SPA) and Differential Power Analysis (DPA) are two examples. Attackers measure the chip’s power consumption to deduce the operations being performed or even the secret keys being used. Effective countermeasures are vital for robust cybersecurity, and the SoC uses several techniques to limit this leakage.

Defensive Techniques: The hardware randomizes its operations to obscure power patterns. It also uses constant-time algorithms. These algorithms ensure that cryptographic functions take the same amount of time to execute, regardless of the data. This prevents attackers from gaining insights through timing analysis.
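The difference between a data-dependent and a constant-time operation is easiest to see in a comparison routine. The example below is a software analogy rather than a description of the SoC's hardware: `leaky_equal` is a deliberately flawed, hypothetical helper, while `hmac.compare_digest` is the Python standard-library function documented as resistant to timing analysis.

```python
import hmac


def leaky_equal(a: bytes, b: bytes) -> bool:
    """Naive comparison: returns at the first mismatch, so its running
    time reveals how many leading bytes of a guess were correct."""
    if len(a) != len(b):
        return False
    for x, y in zip(a, b):
        if x != y:
            return False  # early exit is the timing leak
    return True


def constant_time_equal(a: bytes, b: bytes) -> bool:
    """Comparison whose duration does not depend on where the inputs differ."""
    return hmac.compare_digest(a, b)
```

Both functions return the same answers. The difference is that an attacker timing `leaky_equal` can recover a secret tag byte by byte, whereas `constant_time_equal` gives no such signal.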

ADDRESSING THE THREAT OF A SYSTEM BREACH

A system breach is a constant concern, especially with the rise of zero-day vulnerabilities. An attacker might compromise the main operating system. HiSilicon’s TEE is designed to protect AI models even in this scenario. The TEE is a hardware-isolated environment. It separates its code and data from the rest of the system. The main OS cannot see or modify anything inside the enclave. This isolation is the core of its cybersecurity defenses.

The system uses real-time monitoring for threat detection. This AI-driven approach helps identify and neutralize exploits of zero-day vulnerabilities before they cause a breach, protecting user privacy and the AI model’s intellectual property. Because the monitoring is continuous, the platform can adapt to new threats, which are a persistent challenge for IoT devices.

HiSilicon's "Security by Design" philosophy delivers robust, secure solutions. Its co-designed trusted execution environment protects AI models and user data. The architecture offers superior performance through AI-specific optimization and fast execution. This system provides a blueprint for building trust in next-generation AI and IoT applications.

It also secures sensitive data for technologies like federated learning. This end-to-end data protection makes the architecture essential for the future of IoT.

FAQ

What is a Trusted Execution Environment (TEE)?

A Trusted Execution Environment (TEE) is a secure, isolated area on a chip. It protects code and data from the main operating system. This hardware-level separation ensures the confidentiality and integrity of sensitive AI models and user information during processing.

How does this TEE improve AI performance?

The TEE integrates tightly with the AI hardware. This design reduces latency by minimizing context switches. Dedicated cryptographic accelerators also handle encryption efficiently. This combination minimizes performance overhead, making AI tasks faster than on general-purpose TEEs.

Is the system protected from physical attacks?

Yes. The SoC includes robust physical countermeasures. An active mesh layer covers the chip's surface. Any attempt to tamper with this mesh triggers an immediate security response, such as wiping sensitive data. This design effectively protects against invasive attacks.

How does this help AI developers?

HiSilicon provides a software development kit (SDK) with simple APIs. Developers can secure AI models with minimal code. The SDK handles complex security tasks like attestation and key management. This approach accelerates the development cycle for secure AI applications.