Inside Trusted Execution on HiSilicon AI SoCs

HiSilicon's trusted execution environment offers hardware-enforced security on its AI system-on-chip (SoC) designs. These TEEs isolate and protect high-value AI assets. They safeguard AI models, user data, and cryptographic keys. The company follows a "security and privacy by design" principle. This approach builds security directly into the AI SoC. A trusted hardware root establishes system integrity for all AI execution.

💡 This foundational security makes the SoC a reliable platform for sensitive AI applications.

Key Takeaways

- HiSilicon AI SoCs use a 'security by design' approach. This means security is built into the chip from the start. It protects AI models and user data.

- The core of this security is the Trusted Execution Environment (TEE). It uses hardware to create a secure area. This area keeps sensitive AI tasks separate from other parts of the system.

- HiSilicon's TEE uses ARM TrustZone technology. This technology divides the chip into two worlds. The 'Secure World' protects important data. The 'Normal World' runs regular apps.

- A secure boot process ensures only trusted software runs. It starts with a Hardware Root of Trust. This root checks each piece of software before it runs.

- The TEE protects AI models and data from attacks. It keeps them isolated and encrypted. This helps keep AI intellectual property safe and user data private.

Core TEE Architecture

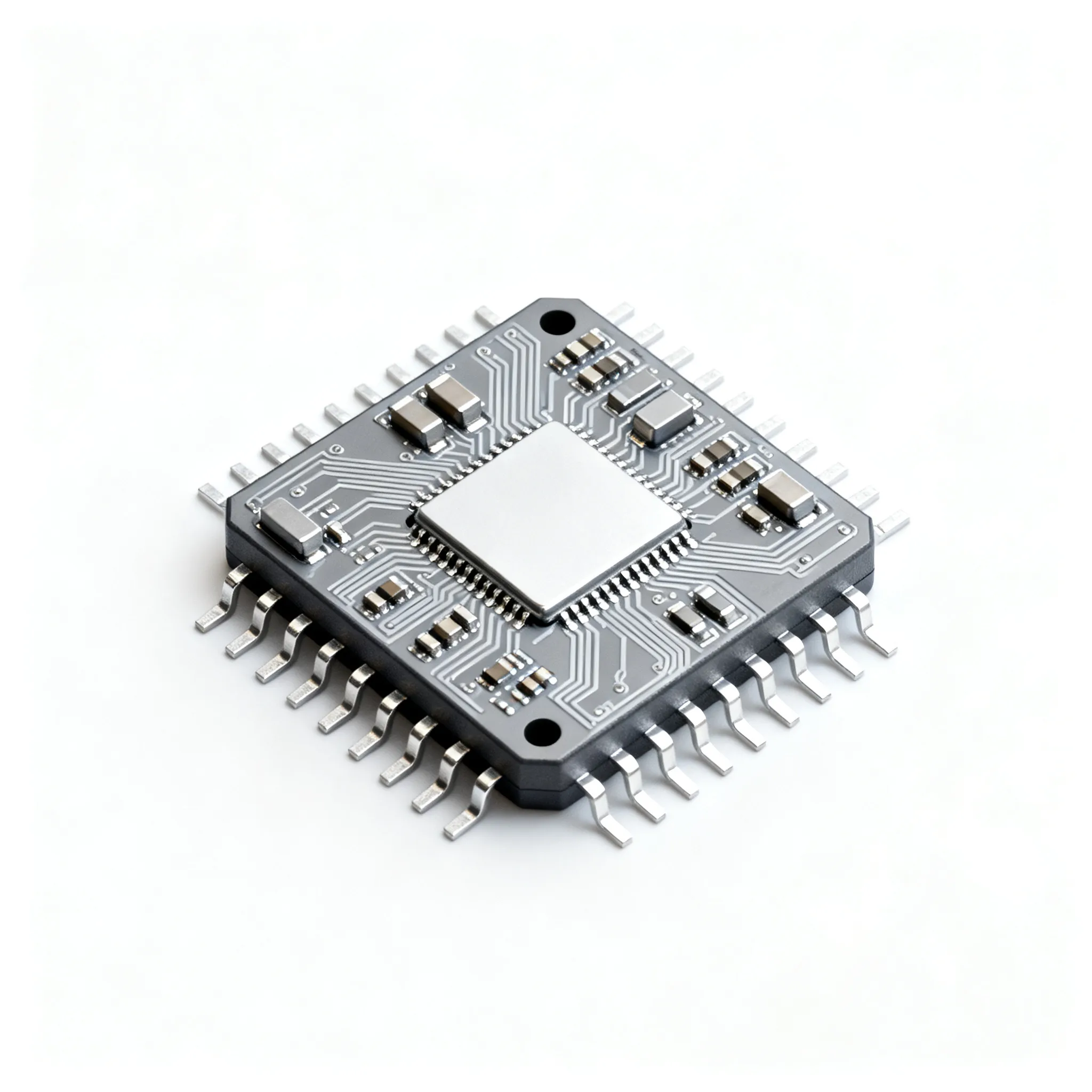

The security of a HiSilicon AI SoC begins at the silicon level. The core architecture creates a fortified environment for all operations. This design philosophy addresses computer security threats from the ground up. It ensures that trust is not an add-on but an intrinsic property of the hardware.

Hardware-Level Isolation

HiSilicon builds its TEE on the foundation of ARM TrustZone technology. This technology is a hardware extension that creates a strong barrier within the processor. It divides the entire system-on-chip into two separate, isolated environments.

- The Normal World: This is where the main operating system (like Linux or Android) and general applications run. It handles everyday tasks for the user.

- The Secure World: This is a protected area for trusted code and sensitive data. It manages assets like cryptographic keys, secure bootloaders, and the AI models themselves.

💡 The ARM TrustZone architecture ensures the Normal World cannot directly access or even see the resources inside the Secure World. This separation is enforced by the hardware, making it a robust defense against software-level attacks.

The SoC switches between these two worlds at run time. A dedicated instruction, the Secure Monitor Call (SMC), triggers the transition: it traps into monitor code that saves the state of one world and restores the state of the other. Because the processor hardware handles the switch, the system can perform secure operations without a significant performance penalty. The processor can run a rich OS in the Normal World, switch to the Secure World to handle a sensitive AI task, and then switch back. This hardware-level partitioning is a critical first line of defense against many security and privacy attacks.
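The world switch can be pictured with a small software model. The sketch below is only an illustration: the `SecureMonitor` class, the `FUNC_SECURE_SUM` function ID, and the service it dispatches to are hypothetical names invented for this example, and real isolation is enforced by the processor hardware rather than by Python objects.

```python
class SecureMonitor:
    """Toy model of the monitor that handles SMC-style world switches."""

    def __init__(self):
        self.world = "normal"   # world the core is currently executing in
        self.trace = []         # record of world switches, for illustration
        self._handlers = {}     # secure services, keyed by function ID

    def register(self, func_id, handler):
        """Install a Secure World service under a (hypothetical) function ID."""
        self._handlers[func_id] = handler

    def smc(self, func_id, payload):
        """Enter the Secure World, run one service, and always switch back."""
        if func_id not in self._handlers:
            raise ValueError(f"unknown secure function id: {func_id:#x}")
        self.world = "secure"
        self.trace.append("secure")
        try:
            return self._handlers[func_id](payload)
        finally:
            # The return path runs even if the service raises an exception.
            self.world = "normal"
            self.trace.append("normal")


# Hypothetical function ID and service; not taken from any HiSilicon interface.
FUNC_SECURE_SUM = 0x1000

monitor = SecureMonitor()
monitor.register(FUNC_SECURE_SUM, lambda values: sum(values))

result = monitor.smc(FUNC_SECURE_SUM, [1, 2, 3])
```

The Normal World caller only ever sees the returned result. The `finally` clause mirrors the requirement that control goes back to the Normal World after every secure call.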

The Secure Boot for Trusted Execution

A secure system needs a trusted starting point. The secure boot process establishes a "chain of trust" for all software that runs on the SoC. This process begins with a Hardware Root of Trust (HRoT), which is an immutable component embedded in the silicon. This HRoT holds the initial secure boot code and public keys needed to verify the first piece of software.

The boot sequence follows a strict, multi-stage verification process:

- The system starts execution from the immutable HRoT.

- The HRoT loads the first-stage bootloader and verifies its digital signature.

- If valid, the first-stage bootloader initializes critical hardware. It then loads and verifies the next software component.

- This chain continues, with each stage verifying the next before passing control.

- Finally, the trusted OS kernel and AI applications are loaded and verified. The system only transfers execution control after all checks pass.

This process uses powerful cryptographic techniques to guarantee software integrity and authenticity. These methods are fundamental countermeasures against tampering and unauthorized modifications.

| Crypto Technique | Purpose in Secure Boot |
|---|---|
| Digital Signatures | Guarantee the authenticity and integrity of boot components. |
| Asymmetric Cryptography | Enables signature verification without exposing a secret key on the device. |
| Secure Hash Algorithms | Create a unique "fingerprint" of the software to detect any changes. |
| Hardware Accelerators | Speed up cryptographic operations to minimize boot time impact. |
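The chain of trust can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not HiSilicon's boot code. A real secure boot verifies asymmetric digital signatures against public keys held by the HRoT. Because the Python standard library has no signature verification, this sketch instead pins a SHA-256 digest of each next stage. The `Stage`, `build_chain`, and `verify_chain` names are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional, Tuple


class BootError(Exception):
    """Raised when a stage fails verification and boot must stop."""


@dataclass
class Stage:
    name: str
    image: bytes
    # Digest of the next stage, carried as part of this stage's verified content.
    expected_next_digest: Optional[str] = None


def stage_digest(stage: Stage) -> str:
    """Hash the image together with the digest it pins for the next stage."""
    manifest = (stage.expected_next_digest or "").encode()
    return hashlib.sha256(stage.image + manifest).hexdigest()


def build_chain(named_images: List[Tuple[str, bytes]]) -> Tuple[Optional[str], List[Stage]]:
    """Build stages back to front so each one pins the digest of its successor."""
    stages: List[Stage] = []
    next_digest: Optional[str] = None
    for name, image in reversed(named_images):
        stage = Stage(name, image, next_digest)
        next_digest = stage_digest(stage)
        stages.append(stage)
    stages.reverse()
    # next_digest is now the digest of the first stage: the value the
    # immutable root of trust would hold.
    return next_digest, stages


def verify_chain(root_digest: Optional[str], stages: List[Stage]) -> List[str]:
    """Verify each stage before 'running' it; stop at the first mismatch."""
    expected = root_digest
    booted: List[str] = []
    for stage in stages:
        if expected is None or stage_digest(stage) != expected:
            raise BootError(f"verification failed at {stage.name}")
        booted.append(stage.name)
        expected = stage.expected_next_digest
    return booted


root, chain = build_chain([
    ("first-stage bootloader", b"bl1 image"),
    ("second-stage bootloader", b"bl2 image"),
    ("trusted OS kernel", b"kernel image"),
])
boot_order = verify_chain(root, chain)
```

Changing a single byte in any image, or in any pinned digest, changes the digest its predecessor expects, so `verify_chain` raises `BootError` before the modified stage would receive control.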

This boot process closes off a common class of attacks by ensuring that only authorized code can run on the device. It blocks attempts to inject malicious code during startup.

Modern AI SoC designs, including chiplet-based architectures, introduce new challenges and potential vulnerabilities. A centralized trust anchor can become a bottleneck or a single point of failure. HiSilicon's approach anticipates these weaknesses by moving toward distributed trust models, in which chiplets establish trust collaboratively. This improves scalability and resilience, and it provides countermeasures against sophisticated hardware-level attacks and supply chain flaws. Such solutions are vital for the future of trusted AI execution.

Securing AI Models and Data

Protecting AI models and the data they process is a primary goal of the HiSilicon TEE. An AI model is a valuable piece of intellectual property. The data it handles can be highly sensitive. Attackers use sophisticated methods to compromise these assets.

- Model Extraction and Inversion: Attackers can query a model repeatedly to reverse-engineer its architecture or even reconstruct the private data used to train it.

- Adversarial Inputs: Malicious actors can craft special inputs, like stickers on a stop sign or inaudible audio commands, to trick an AI system into making dangerous mistakes.

- Firmware and Runtime Exploits: Vulnerabilities in the operating system or AI runtimes can give attackers full control, allowing them to steal or tamper with models directly on the device.

HiSilicon's TEE provides hardware-level countermeasures against these threats. It creates a secure vault for all sensitive AI operations on the SoC.

Isolated AI Model Processing

HiSilicon's trusted execution environment ensures that AI models are loaded and run in complete isolation. The entire AI inference process happens inside the Secure World. This prevents any code running in the Normal World, including the main OS, from accessing or modifying the model.

💡 This isolation is achieved using dedicated secure memory regions, often called hardware enclaves. The SoC encrypts all data and code within this region. The processor only decrypts it for use inside the enclave itself. To any outside observer, including privileged system software, the model's parameters and the data it processes remain encrypted and unintelligible.

The HiSilicon Hi3796CV300 SoC is a practical example of this design. This system-on-chip integrates hardware cryptographic accelerators and secure memory pathways. These features allow it to perform AI computations securely without exposing sensitive information to the rest of the system.

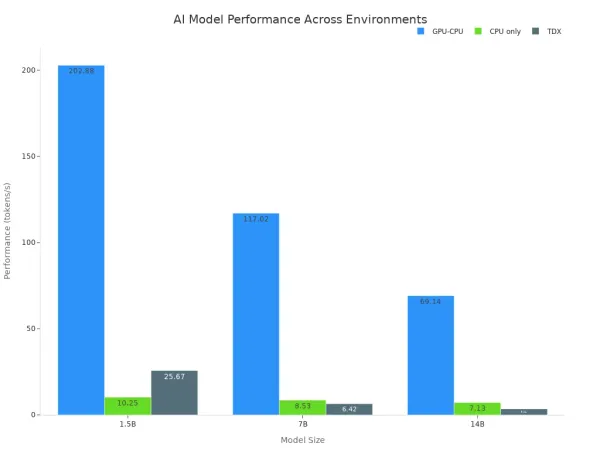

However, this enhanced security involves performance considerations. Running an AI model inside a TEE can introduce overhead compared to running it in an open environment with full GPU access. The encryption and secure data handling processes require additional computational resources.

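The throughput figures below illustrate the trade-off between execution speed and security. The TDX column appears to refer to Intel Trust Domain Extensions, a TEE for server processors, so the numbers indicate the general cost of confidential execution rather than measurements on a HiSilicon SoC.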

| Model Size | GPU-CPU (tokens/s) | CPU only (tokens/s) | TDX (tokens/s) |
|---|---|---|---|
| 1.5B | 202.88 | 10.25 | 25.67 |
| 7B | 117.02 | 8.53 | 6.42 |
| 14B | 69.14 | 7.13 | 3.44 |
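To put the table in relative terms, the short calculation below divides the GPU-CPU throughput by the TDX throughput for each model size. The numbers are copied from the table. The dictionary layout and function names are only illustrative.

```python
# Throughput in tokens/s, copied from the table above.
THROUGHPUT = {
    "1.5B": {"gpu_cpu": 202.88, "cpu_only": 10.25, "tdx": 25.67},
    "7B": {"gpu_cpu": 117.02, "cpu_only": 8.53, "tdx": 6.42},
    "14B": {"gpu_cpu": 69.14, "cpu_only": 7.13, "tdx": 3.44},
}


def slowdown(baseline: float, measured: float) -> float:
    """How many times slower 'measured' is than 'baseline'."""
    return baseline / measured


def tdx_slowdowns(table: dict) -> dict:
    """Slowdown of the TDX configuration relative to GPU-CPU, per model size."""
    return {
        size: round(slowdown(row["gpu_cpu"], row["tdx"]), 2)
        for size, row in table.items()
    }


ratios = tdx_slowdowns(THROUGHPUT)
for size, ratio in ratios.items():
    print(f"{size}: GPU-CPU is about {ratio}x faster than TDX")
```

For these figures the gap grows with model size, from roughly 8x for the 1.5B model to roughly 18x and 20x for the 7B and 14B models.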

While a secure enclave may not match the raw speed of an unsecured GPU, HiSilicon's SoC designs use hardware accelerators to minimize this impact. These solutions offer a balanced approach, delivering strong security for AI workloads with optimized performance. This makes the SoC a viable platform for deploying trustworthy AI.

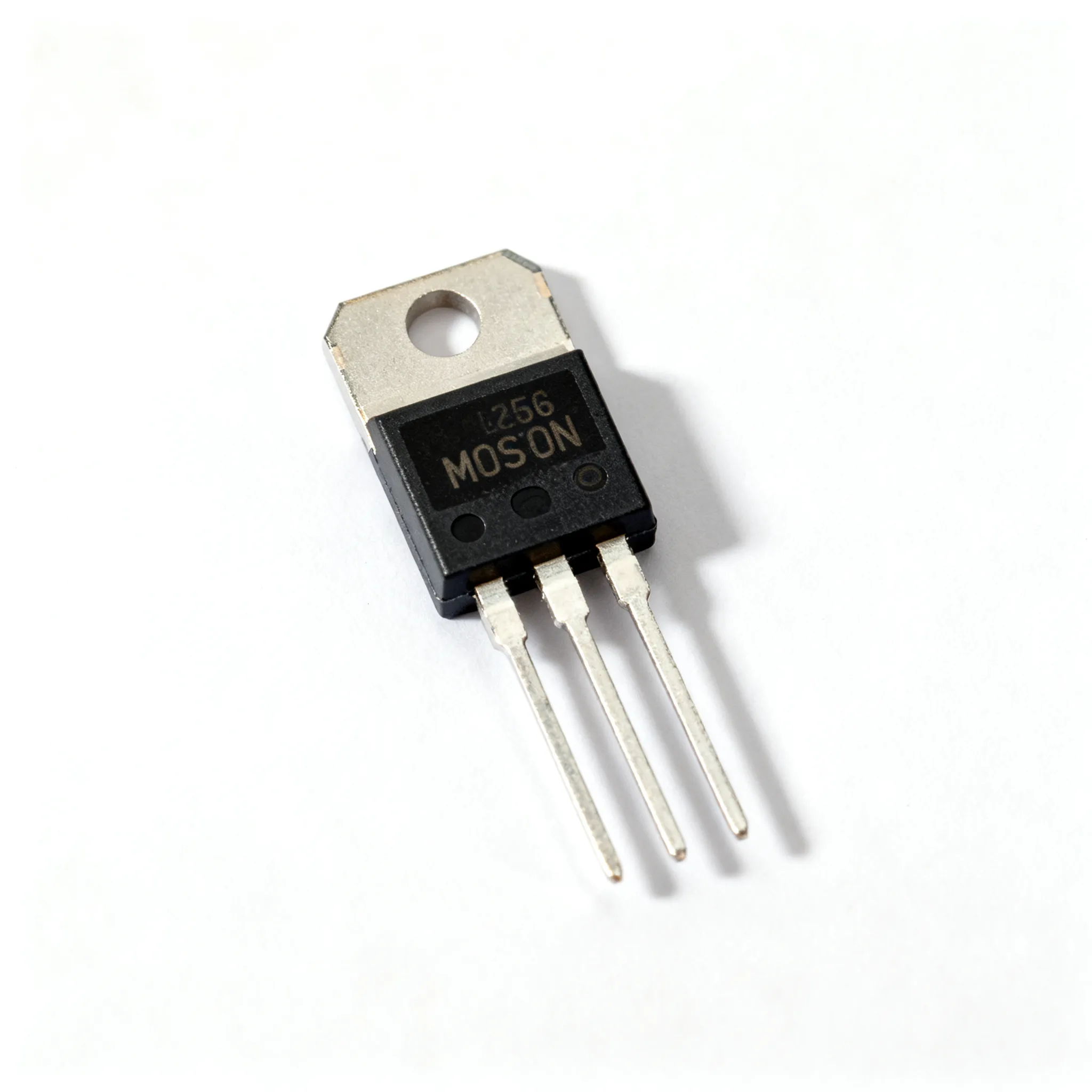

Secure Key Management

Cryptographic keys are the foundation of data security. They encrypt AI models, protect user data, and verify secure communications. A compromised key can undermine the entire security architecture of the SoC. The TEE functions as a hardware-based vault for managing these critical assets.

The HiSilicon SoC generates, stores, and uses cryptographic keys exclusively within the Secure World. Keys are never exposed to the Normal World OS or any applications running there. When an application needs to perform a cryptographic operation, it sends a request to the TEE. The secure execution environment performs the operation internally and returns only the result. The key itself never leaves the protected enclave.
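The request-and-result pattern described above can be sketched as follows. This is a minimal model, assuming an HMAC-SHA-256 operation as the example cryptographic service. The `SecureKeyVault` class and its methods are hypothetical names, and Python's name mangling only imitates the isolation that real hardware enforces.

```python
import hashlib
import hmac
import secrets


class SecureKeyVault:
    """Toy stand-in for a TEE key service: keys go in, only results come out."""

    def __init__(self):
        # Keys live only inside the vault object; no method returns them.
        self.__keys = {}

    def generate_key(self, key_id: str) -> None:
        """Create a fresh 256-bit key inside the vault."""
        if key_id in self.__keys:
            raise ValueError(f"key already exists: {key_id}")
        self.__keys[key_id] = secrets.token_bytes(32)

    def sign(self, key_id: str, message: bytes) -> bytes:
        """Compute an HMAC-SHA-256 tag internally and return only the tag."""
        key = self.__keys[key_id]
        return hmac.new(key, message, hashlib.sha256).digest()

    def verify(self, key_id: str, message: bytes, tag: bytes) -> bool:
        """Check a tag in constant time without revealing the key."""
        return hmac.compare_digest(self.sign(key_id, message), tag)


vault = SecureKeyVault()
vault.generate_key("model-integrity")

# The Normal World application sends a request and receives only the result.
tag = vault.sign("model-integrity", b"model weights v1")
is_valid = vault.verify("model-integrity", b"model weights v1", tag)
is_tampered_valid = vault.verify("model-integrity", b"model weights v2", tag)
```

The caller can request operations by key ID but has no method that returns key material, which is the property the TEE provides in hardware.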

This approach provides robust protection against software-based attacks. Even if the main operating system is compromised by malware, the attacker cannot access the cryptographic keys stored inside the TEE. This hardware isolation is a fundamental advantage over software-only key management solutions.

HiSilicon's key management system aligns with established security standards and regulations. These frameworks set requirements for secure key generation, storage, and usage, or for the data those keys protect. Relevant frameworks include:

- FIPS 140-2: A U.S. government standard for cryptographic modules, now succeeded by FIPS 140-3.

- PCI DSS: A security standard for organizations that handle payment card data.

- ISO/IEC 27001: An international standard for information security management.

- GDPR: A regulation focused on data protection and privacy for individuals in the EU.

By adhering to these rigorous standards, the HiSilicon AI SoC provides a verifiable and trustworthy foundation for building secure applications.

The Role of Trusted Execution Environments

Trusted execution environments play a vital role in securing modern AI systems. They provide verifiable hardware protection for critical assets. This protection extends beyond the SoC to the entire AI lifecycle, from development to deployment.

Protecting AI Intellectual Property

A trained AI model represents a large investment in data, compute, and engineering, and its theft can cause significant financial losses. HiSilicon's trusted execution environment protects this IP. It creates a secure enclave where the AI model is encrypted and isolated.

💡 This hardware-level isolation prevents unauthorized access or reverse engineering. The model's weights and architecture remain confidential, even from the device's main operating system. Only authorized AI applications can execute the model inside the protected environment.

This approach safeguards a company's competitive advantage. It ensures that the investment in AI development is not compromised by theft or illegal replication.

Ensuring Data Integrity and Privacy

The TEE is essential for maintaining the integrity and privacy of data used by AI systems. Attackers can use techniques like data poisoning to corrupt training data. This can cause an AI model to make dangerous errors. TEEs prevent such tampering by processing data within a protected space.

Data privacy is another major concern. Regulations like GDPR require strong protection for personal information. The trusted execution environment ensures that sensitive user data remains encrypted and confidential throughout the AI inference process.

- Data enters the enclave in an encrypted state.

- The AI model processes it inside the secure environment.

- The results are returned without exposing the raw data.

This process helps companies comply with strict privacy laws while leveraging powerful AI capabilities.

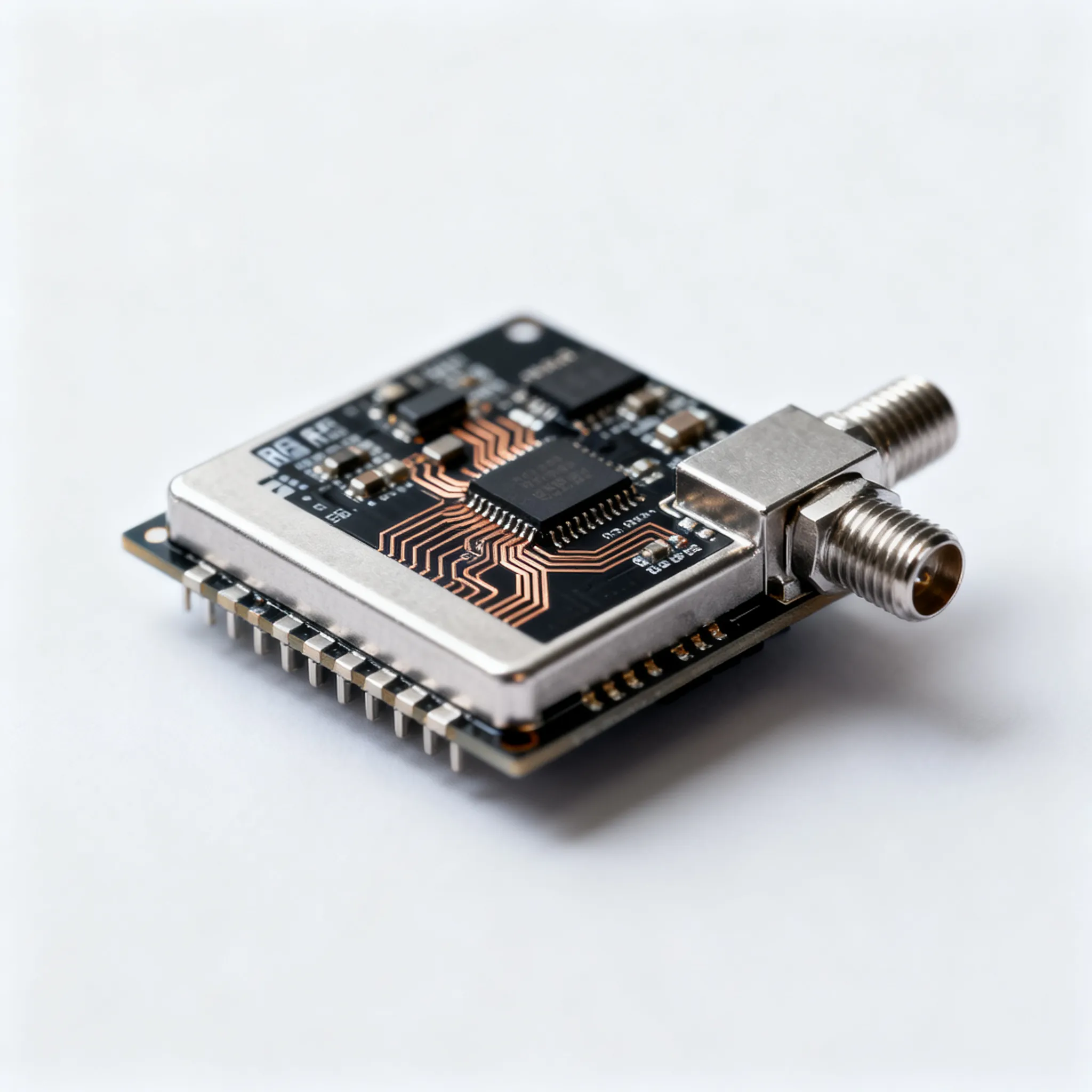

Enabling Secure OTA Updates

Secure Over-the-Air (OTA) updates are critical for maintaining device security over time. Insecure update mechanisms create a major vulnerability. Attackers can push malicious firmware to take control of a device.

TEEs provide a robust solution for secure updates. Before installing a new firmware package, the TEE performs several checks:

- It verifies the update's digital signature to confirm its authenticity.

- It checks the version number to prevent rollback attacks to older, vulnerable software.

The system only proceeds with the update if all checks pass. This ensures that only legitimate and authorized code can run on the device, protecting the AI system from compromise.
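The two checks can be sketched as a single function. This is a simplified illustration, not a HiSilicon update format. The `UpdatePackage` layout and function names are hypothetical. An HMAC tag stands in for the digital signature because the Python standard library has no asymmetric signature support. A real device would hold only a public verification key.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class UpdatePackage:
    version: int
    payload: bytes
    tag: bytes  # HMAC stand-in for the vendor's digital signature


def _expected_tag(key: bytes, version: int, payload: bytes) -> bytes:
    # The version is authenticated together with the payload, so an attacker
    # cannot relabel an old image with a newer version number.
    message = version.to_bytes(4, "big") + payload
    return hmac.new(key, message, hashlib.sha256).digest()


def make_package(key: bytes, version: int, payload: bytes) -> UpdatePackage:
    """Vendor side: build a package and attach its authentication tag."""
    return UpdatePackage(version, payload, _expected_tag(key, version, payload))


def check_update(key: bytes, installed_version: int, pkg: UpdatePackage) -> str:
    """Device side: authenticity first, then the anti-rollback check."""
    expected = _expected_tag(key, pkg.version, pkg.payload)
    if not hmac.compare_digest(pkg.tag, expected):
        return "reject: bad signature"
    if pkg.version <= installed_version:
        return "reject: rollback"
    return "accept"


KEY = b"demo-key-for-illustration-only"
INSTALLED_VERSION = 7

good = make_package(KEY, 8, b"firmware v8")
old = make_package(KEY, 6, b"firmware v6")
forged = UpdatePackage(9, b"malicious firmware", good.tag)

results = [check_update(KEY, INSTALLED_VERSION, p) for p in (good, old, forged)]
```

Here `results` holds one verdict per package: the newer, correctly tagged package is accepted, the older one is rejected as a rollback, and the forged one fails the authenticity check.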

HiSilicon's "Security by Design" strategy embeds trust directly into its AI system-on-chip. This approach provides verifiable, hardware-backed protection for AI models and sensitive data, and the trusted execution environment extends that protection across the whole AI workload. This hardware-first method offers distinct advantages over software-only solutions.

- Hardware TEEs provide stronger protection for AI assets.

- Software solutions can be faster for light tasks, but they cannot protect assets once the operating system itself is compromised.

This robust foundation makes the HiSilicon AI SoC a critical enabler for trustworthy AI on edge devices, including future AI SoC designs.

FAQ

What is a Trusted Execution Environment (TEE)?

A Trusted Execution Environment is a secure area on the SoC. Hardware creates this zone. It isolates and protects sensitive code and data from the main operating system. This design provides a foundation for secure AI applications.

How does the TEE protect AI models?

The TEE loads and runs AI models inside the isolated Secure World. It encrypts the model's data. This process prevents the main OS from accessing or tampering with the valuable AI intellectual property.

Does using a TEE slow down AI performance?

Running tasks in a TEE can add some overhead due to security processes. HiSilicon AI SoCs use dedicated hardware accelerators. These accelerators minimize the performance impact, balancing security with speed for AI workloads.

What is a Hardware Root of Trust (HRoT)?

A Hardware Root of Trust is an unchangeable security component built into the silicon. It holds the initial code and keys. The HRoT starts the secure boot process, creating a chain of trust for all software.